Podcasts about Generalization

- 421 PODCASTS

- 568 EPISODES

- 43m AVG DURATION

- 1 WEEKLY EPISODE

- Feb 13, 2026 LATEST

Latest news about Generalization

- What to Do If You Take AGI Seriously LessWrong - Feb 17, 2026

- What Enables Generalization in Time Series Forecasting? Lessons from LLIAM — Divwardhan Agrawal Deep Learning on Medium - Feb 1, 2026

- The Unprompted Spark: Why Details Matter in an Age of Generalization Artificial Intelligence on Medium - Jan 27, 2026

- Corrupting LLMs Through Weird Generalizations Schneier on Security - Jan 12, 2026

- The Mathematical Skills of Generalization and Abstraction Technology on Medium - Dec 22, 2025

- Adaptable Drupal modules: code meant to be adapted, not installed Dries Buytaert - Dec 18, 2025

- Thoughts on AI progress and why AI labs' actions hint at a worldview in which AI models will continue to fare poorly at generalization and on-the-job learning (Dwarkesh Patel/Dwarkesh Podcast) Techmeme - Dec 4, 2025

- Generalization bias in large language model summarization of scientific research Stephen's Web ~ OLDaily - Nov 17, 2025

- Four generalizations of the Pythagorean theorem John D. Cook - Nov 13, 2025

- Researchers isolate memorization from reasoning in AI neural networks Biz & IT – Ars Technica - Nov 10, 2025

Latest podcast episodes about Generalization

Neuro Navigators Episode 24: Can Motor Performance Be Driven By Cognition? The CO-OP Approach

Helene Polatajko, PhD, OT(C), FCAOT, FCAHS, LLD(h.c.), OC, a renowned occupational therapist, researcher, and co-developer of the CO-OP Approach, joins host J.J. Mowder-Tinney for a thought-provoking conversation on how cognition can drive motor performance. Together, they explore the power of guided discovery, client-centered goals, and the surprising role of self-generated strategies in rehabilitation. From dancing to dressing to stroke recovery, you'll hear how thinking differently about movement can change what your patients are capable of. Tune in to reframe your clinical lens and get inspired to incorporate “goal-plan-do-check” into your own sessions.

Learning Objectives

- Analyze the evidence around CO-OP approaches to meaningful activities across pediatric and adult populations
- Apply evidence-based, practical strategies to actionably address challenges in occupational
- Solve patient case scenarios involving clients with coordination or motor learning impairment

Timestamps

- (00:00:00) Welcome
- (00:00:05) Introduction to cognition and motor-based performance
- (00:01:20) Dr. Polatajko's background and journey in occupational therapy
- (00:05:30) The self-driving car
- (00:12:40) Cognitive orientation to daily occupational performance (CO-OP)
- (00:14:35) Dynamic performance analysis
- (00:22:15) Guided discovery
- (00:30:24) Generalization and transfer of skills
- (00:34:14) Goal-plan-do-check
- (00:53:25) Key takeaways and conclusion

Neuro Navigators is brought to you by Medbridge. If you'd like to earn continuing education credit for listening to this episode and access bonus takeaway handouts, log in to your Medbridge account and navigate to the course where you'll find accreditation details. If applicable, complete the post-course assessment and survey to be eligible for credit. The takeaway handout on Medbridge gives you the key points mentioned in this episode, along with additional resources you can implement into your practice right away.

To hear more episodes of Neuro Navigators, visit https://www.medbridge.com/neuro-navigators

If you'd like to subscribe to Medbridge, visit https://www.medbridge.com/pricing/

IG: https://www.instagram.com/medbridgeteam/

The First Mechanistic Interpretability Frontier Lab — Myra Deng & Mark Bissell of Goodfire AI

From Palantir and Two Sigma to building Goodfire into the poster-child for actionable mechanistic interpretability, Mark Bissell (Member of Technical Staff) and Myra Deng (Head of Product) are trying to turn “peeking inside the model” into a repeatable production workflow by shipping APIs, landing real enterprise deployments, and now scaling the bet with a recent $150M Series B funding round at a $1.25B valuation.

In this episode, we go far beyond the usual “SAEs are cool” take. We talk about Goodfire's core bet: that the AI lifecycle is still fundamentally broken because the only reliable control we have is data, and we post-train, RLHF, and fine-tune by “slurping supervision through a straw,” hoping the model picks up the right behaviors while quietly absorbing the wrong ones. Goodfire's answer is to build a bi-directional interface between humans and models: read what's happening inside, edit it surgically, and eventually use interpretability during training so customization isn't just brute-force guesswork.

Mark and Myra walk through what that looks like when you stop treating interpretability like a lab demo and start treating it like infrastructure: lightweight probes that add near-zero latency, token-level safety filters that can run at inference time, and interpretability workflows that survive messy constraints (multilingual inputs, synthetic→real transfer, regulated domains, no access to sensitive data). We also get a live window into what “frontier-scale interp” means operationally (i.e. steering a trillion-parameter model in real time by targeting internal features) plus why the same tooling generalizes cleanly from language models to genomics, medical imaging, and “pixel-space” world models.

We discuss:

* Myra + Mark's path: Palantir (health systems, forward-deployed engineering) → Goodfire early team; Two Sigma → Head of Product, translating frontier interpretability research into a platform and real-world deployments
* What “interpretability” actually means in practice: not just post-hoc poking, but a broader “science of deep learning” approach across the full AI lifecycle (data curation → post-training → internal representations → model design)
* Why post-training is the first big wedge: “surgical edits” for unintended behaviors like reward hacking, sycophancy, noise learned during customization plus the dream of targeted unlearning and bias removal without wrecking capabilities
* SAEs vs probes in the real world: why SAE feature spaces sometimes underperform classifiers trained on raw activations for downstream detection tasks (hallucination, harmful intent, PII), and what that implies about “clean concept spaces”
* Rakuten in production: deploying interpretability-based token-level PII detection at inference time to prevent routing private data to downstream providers plus the gnarly constraints: no training on real customer PII, synthetic→real transfer, English + Japanese, and tokenization quirks
* Why interp can be operationally cheaper than LLM-judge guardrails: probes are lightweight, low-latency, and don't require hosting a second large model in the loop
* Real-time steering at frontier scale: a demo of steering Kimi K2 (~1T params) live and finding features via SAE pipelines, auto-labeling via LLMs, and toggling a “Gen-Z slang” feature across multiple layers without breaking tool use
* Hallucinations as an internal signal: the case that models have latent uncertainty / “user-pleasing” circuitry you can detect and potentially mitigate more directly than black-box methods
* Steering vs prompting: the emerging view that activation steering and in-context learning are more closely connected than people think, including work mapping between the two (even for jailbreak-style behaviors)
* Interpretability for science: using the same tooling across domains (genomics, medical imaging, materials) to debug spurious correlations and extract new knowledge up to and including early biomarker discovery work with major partners
* World models + “pixel-space” interpretability: why vision/video models make concepts easier to see, how that accelerates the feedback loop, and why robotics/world-model partners are especially interesting design partners
* The north star: moving from “data in, weights out” to intentional model design where experts can impart goals and constraints directly, not just via reward signals and brute-force post-training

Goodfire AI
* Website: https://goodfire.ai
* LinkedIn: https://www.linkedin.com/company/goodfire-ai/
* X: https://x.com/GoodfireAI

Myra Deng
* Website: https://myradeng.com/
* LinkedIn: https://www.linkedin.com/in/myra-deng/
* X: https://x.com/myra_deng

Mark Bissell
* LinkedIn: https://www.linkedin.com/in/mark-bissell/
* X: https://x.com/MarkMBissell

Timestamps

00:00:00 Introduction
00:00:05 Introduction to the Latent Space Podcast and Guests from Goodfire
00:00:29 What is Goodfire? Mission and Focus on Interpretability
00:01:01 Goodfire's Practical Approach to Interpretability
00:01:37 Goodfire's Series B Fundraise Announcement
00:02:04 Backgrounds of Mark and Myra from Goodfire
00:02:51 Team Structure and Roles at Goodfire
00:05:13 What is Interpretability? Definitions and Techniques
00:07:29 Post-training vs. Pre-training Interpretability Applications
00:08:51 Using Interpretability to Remove Unwanted Behaviors
00:10:09 Grokking, Double Descent, and Generalization in Models
00:12:06 Subliminal Learning and Hidden Biases in Models
00:14:07 How Goodfire Chooses Research Directions and Projects
00:16:04 Limitations of SAEs and Probes in Interpretability
00:18:14 Rakuten Case Study: Production Deployment of Interpretability
00:21:12 Efficiency Benefits of Interpretability Techniques
00:21:26 Live Demo: Real-Time Steering in a Trillion Parameter Model
00:25:15 How Steering Features are Identified and Labeled
00:26:51 Detecting and Mitigating Hallucinations Using Interpretability
00:31:20 Equivalence of Activation Steering and Prompting
00:34:06 Comparing Steering with Fine-Tuning and LoRA Techniques
00:36:04 Model Design and the Future of Intentional AI Development
00:38:09 Getting Started in Mechinterp: Resources, Programs, and Open Problems
00:40:51 Industry Applications and the Rise of Mechinterp in Practice
00:41:39 Interpretability for Code Models and Real-World Usage
00:43:07 Making Steering Useful for More Than Stylistic Edits
00:46:17 Applying Interpretability to Healthcare and Scientific Discovery
00:49:15 Why Interpretability is Crucial in High-Stakes Domains like Healthcare
00:52:03 Call for Design Partners Across Domains
00:54:18 Interest in World Models and Visual Interpretability
00:57:22 Sci-Fi Inspiration: Ted Chiang and Interpretability
01:00:14 Interpretability, Safety, and Alignment Perspectives
01:04:27 Weak-to-Strong Generalization and Future Alignment Challenges
01:05:38 Final Thoughts and Hiring/Collaboration Opportunities at Goodfire

Transcript

Shawn Wang [00:00:05]: So welcome to the Latent Space pod. We're back in the studio with our special MechInterp co-host, Vibhu. Welcome. Mochi, Mochi's special co-host. And Mochi, the mechanistic interpretability doggo.
We have with us Mark and Myra from Goodfire. Welcome. Thanks for having us on. Maybe we can sort of introduce Goodfire and then introduce you guys. How do you introduce Goodfire today?

Myra Deng [00:00:29]: Yeah, it's a great question. So Goodfire, we like to say, is an AI research lab that focuses on using interpretability to understand, learn from, and design AI models. And we really believe that interpretability will unlock the new generation, next frontier of safe and powerful AI models. That's our description right now, and I'm excited to dive more into the work we're doing to make that happen.

Shawn Wang [00:00:55]: Yeah. And there's always like the official description. Is there an understatement? Is there an unofficial one that sort of resonates more with a different audience?

Mark Bissell [00:01:01]: Well, being an AI research lab that's focused on interpretability, obviously people have a lot that they think about when they think of interpretability. And I think we have a pretty broad definition of what that means and the types of places that can be applied. And in particular, applying it in production scenarios, in high stakes industries, and really taking it sort of from the research world into the real world. Which, you know, it's a new field, so that hasn't been done all that much. And we're excited about actually seeing that sort of put into practice.

Shawn Wang [00:01:37]: Yeah, I would say it wasn't too long ago that Anthropic was like still putting out like Toy Models of Superposition and that kind of stuff. And I wouldn't have pegged it to be this far along. When you and I talked at NeurIPS, you were talking a little bit about your production use cases and your customers. And then not to bury the lede, today we're also announcing the fundraise, your Series B. $150 million. $150 million at a $1.25B valuation. Congrats, Unicorn.

Mark Bissell [00:02:02]: Thank you.
Yeah, no, things move fast.

Shawn Wang [00:02:04]: We were talking to you in December and already some big updates since then. Let's dive, I guess, into a bit of your backgrounds as well. Mark, you were at Palantir working on health stuff, which is really interesting because Goodfire has some interesting like health use cases. I don't know how related they are in practice.

Mark Bissell [00:02:22]: Yeah, not super related, but I don't know. It was helpful context to know what it's like just to work with health systems and generally in that domain. Yeah.

Shawn Wang [00:02:32]: And Myra, you were at Two Sigma, which actually I was also at Two Sigma back in the day. Wow, nice.

Myra Deng [00:02:37]: Did we overlap at all?

Shawn Wang [00:02:38]: No, this is when I was briefly a software engineer before I became a sort of developer relations person. And now you're head of product. What are your sort of respective roles, just to introduce people to like what all gets done in Goodfire?

Mark Bissell [00:02:51]: Yeah, prior to Goodfire, I was at Palantir for about three years as a forward deployed engineer, now a hot term. Wasn't always that way. And as a technical lead on the health care team. And at Goodfire, I'm a member of the technical staff. And honestly, that I think is about as specific as I could describe myself because I've worked on a range of things. And, you know, it's a fun time to be at a team that's still reasonably small. I think when I joined I was one of the first like ten employees, now we're above 40, but still, it looks like there's always a mix of research and engineering and product and all of the above that needs to get done. And I think everyone across the team is, you know, pretty much a switch hitter in the roles they do. So I think you've seen some of the stuff that I worked on related to image models, which was sort of like a research demo.
More recently, I've been working on our scientific discovery team with some of our life sciences partners, but then also building out our core platform for more of like flexing some of the kind of MLE and developer skills as well.

Shawn Wang [00:03:53]: Very generalist. And you also had like a very like a founding engineer type role.

Myra Deng [00:03:58]: Yeah, yeah.

Shawn Wang [00:03:59]: So I also started as I still am a member of technical staff, did a wide range of things from the very beginning, including like finding our office space and all of this, which we both visited when you had that open house thing. It was really nice.

Myra Deng [00:04:13]: Thank you. Thank you. Yeah. Plug to come visit our office.

Shawn Wang [00:04:15]: It looked like it was like 200 people. It has room for 200 people. But you guys are like 10.

Myra Deng [00:04:22]: For a while, it was very empty. But yeah, like Mark, I spend a lot of my time as head of product. I think product is a bit of a weird role these days, but a lot of it is thinking about how do we take our frontier research and really apply it to the most important real world problems and how does that then translate into a platform that's repeatable or a product and working across, you know, the engineering and research teams to make that happen and also communicating to the world? Like, what is interpretability? What is it used for? What is it good for? Why is it so important? All of these things are part of my day-to-day as well.

Shawn Wang [00:05:01]: I love like "what is" things because that's a very crisp like starting point for people like coming to a field. They all do a fun thing. Vibhu, why don't you try tackling what is interpretability and then they can correct us.

Vibhu Sapra [00:05:13]: Okay, great. So I think like one, just to kick off, it's a very interesting role to be head of product, right? Because you guys, at least as a lab, you're more of an applied interp lab, right?
Which is pretty different than just normal interp, like a lot of background research. But yeah. You guys actually ship an API to try these things. You have Ember, you have products around it, which not many do. Okay. What is interp? So basically you're trying to have an understanding of what's going on in the model, in the internals. So different approaches to do that. You can do probing, SAEs, transcoders, all this stuff. But basically you have a hypothesis. You have something that you want to learn about what's happening in a model's internals. And then you're trying to solve that from there. You can do stuff like, you know, you can do activation mapping. You can try to do steering. There's a lot of stuff that you can do, but the key question is, you know, from input to output, we want to have a better understanding of what's happening and, you know, how can we adjust what's happening on the model internals? How'd I do?

Mark Bissell [00:06:12]: That was really good. I think that was great. I think it's also kind of a minefield: if you ask 50 people who quote unquote work in interp, like what is interpretability, you'll probably get 50 different answers. And, yeah, to some extent also like where Goodfire sits in the space. I think that we're an AI research company above all else. And interpretability is a set of methods that we think are really useful and worth kind of specializing in, in order to accomplish the goals we want to accomplish. But I think we also sort of see some of the goals as even broader, as almost like the science of deep learning and just taking a not black box approach to kind of any part of the like AI development life cycle, whether that means using interp for like data curation while you're training your model or for understanding what happened during post-training or for, you know, understanding activations and sort of internal representations, what is in there semantically. And then a lot of sort of exciting updates that are sort of also part of the fundraise around bringing interpretability to training, which I don't think has been done all that much before. A lot of this stuff is sort of post-hoc poking at models as opposed to actually using this to intentionally design them.

Shawn Wang [00:07:29]: Is this post-training or pre-training or is that not a useful...

Myra Deng [00:07:33]: Currently focused on post-training, but there's no reason the techniques wouldn't also work in pre-training.

Shawn Wang [00:07:38]: Yeah. It seems like it would be more active, applicable post-training because basically I'm thinking like rollouts or like, you know, having different variations of a model that you can tweak with your steering. Yeah.

Myra Deng [00:07:50]: And I think in a lot of the news that you've seen on like Twitter or whatever, you've seen a lot of unintended side effects come out of post-training processes, you know, overly sycophantic models or models that exhibit strange reward hacking behavior. I think these are like extreme examples. There's also, you know, more mundane, like enterprise use cases where, you know, they try to customize or post-train a model to do something and it learns some noise or it doesn't appropriately learn the target task. And a big question that we've always had is like, how do you use your understanding of what the model knows and what it's doing to actually guide the learning process?

Shawn Wang [00:08:26]: Yeah, I mean, uh, you know, just to anchor this for people, uh, one of the biggest controversies of last year was 4o GlazeGate. I've never heard of GlazeGate.
I didn't know that was what it was called. The other one, they called it that on the blog post and I was like, well, how did OpenAI call it? Like officially use that term. And I'm like, that's funny, but like, yeah, I guess it's the pitch that if they had worked with Goodfire, they would have avoided it. Like, you know what I'm saying?

Myra Deng [00:08:51]: I think so. Yeah. Yeah.

Mark Bissell [00:08:53]: I think that's certainly one of the use cases. Yeah. I think the reason why post-training is a place where this makes a lot of sense is a lot of what we're talking about is surgical edits. You know, you want to be able to have expert feedback, very surgically change how your model is doing, whether that is, you know, removing a certain behavior that it has. So, you know, one of the things that we've been looking at, another like common area where you would want to make a somewhat surgical edit, is some of the models that have, say, political bias. Like you look at Qwen or, um, R1 and they have sort of like this CCP bias.

Shawn Wang [00:09:27]: Is there a CCP vector?

Mark Bissell [00:09:29]: Well, there are certainly internal, yeah, parts of the representation space where you can sort of see where that lives. Yeah. Um, and you want to kind of, you know, extract that piece out.

Shawn Wang [00:09:40]: Well, I always say, you know, whenever you find a vector, a fun exercise is just like, make it very negative to see what the opposite of CCP is.

Mark Bissell [00:09:47]: The super America, bald eagles flying everywhere. But yeah. So in general, like lots of post-training tasks where you'd want to be able to do that. Whether it's unlearning a certain behavior or, you know, some of the other kind of cases where this comes up is, are you familiar with like the grokking behavior?
I mean, I know the machine learning term of grokking.

Shawn Wang [00:10:09]: Yeah.

Mark Bissell [00:10:09]: Sort of this like double descent idea of having a model that is able to learn a generalizing solution, as opposed to even if memorization of some task would suffice, you want it to learn the more general way of doing a thing. And so, you know, another way that you can think about having surgical access to a model's internals would be: learn from this data, but learn in the right way, if there are many possible, you know, ways to do that. Can interp solve the double descent problem?

Shawn Wang [00:10:41]: Depends, I guess, on how you... Okay. So I viewed double descent as a problem because then you're like, well, if the loss curves level out, then you're done, but maybe you're not done. Right. Right. But like, if you actually can interpret what is generalizing, or what is still changing even though the loss is not changing, then maybe you can actually not view it as a double descent problem. And actually you're just sort of translating the space in which you view loss and like, and then you have a smooth curve. Yeah.

Mark Bissell [00:11:11]: I think that's certainly like the domain of problems that we're looking to get.

Shawn Wang [00:11:15]: Yeah. To me, like double descent is like the biggest thing to like ML research where like, if you believe in scaling, then you need to know where to scale. But if you believe in double descent, then you don't believe in anything where like anything levels off, like.

Vibhu Sapra [00:11:30]: I mean, also tangentially there's like, okay, when you talk about the China vector, right. There's the subliminal learning work. It was from the Anthropic Fellows program where basically you can have hidden biases in a model.
And as you distill down or, you know, as you train on distilled data, those biases always show up, even if like you explicitly try to not train on them. So, you know, it's just like another use case of: okay, if we can interpret what's happening in post-training, you know, can we clear some of this? Can we even determine what's there? Because yeah, it's just like some worrying research that's out there that shows, you know, we really don't know what's going on.

Mark Bissell [00:12:06]: Yeah. I think that's the biggest sentiment that we're sort of hoping to tackle. Nobody knows what's going on. Right. Like subliminal learning is just an insane concept when you think about it. Right. Train a model on not even the logits, literally the output text of a bunch of random numbers. And now your model loves owls. And you see behaviors like that, that are just, they defy intuition. And there are mathematical explanations that you can get into, but. I mean.

Shawn Wang [00:12:34]: It feels so early days. Objectively, there are a sequence of numbers that are more owl-like than others. There should be.

Mark Bissell [00:12:40]: According to certain models. Right. It's interesting. I think it only applies to models that were initialized from the same starting seed. Usually, yes.

Shawn Wang [00:12:49]: But I mean, I think that's a cheat code because there's not enough compute. But like if you believe in like platonic representation, like probably it will transfer across different models as well. Oh, you think so?

Mark Bissell [00:13:00]: I think of it more as a statistical artifact of models initialized from the same seed sort of. There's something that is like path dependent from that seed that might cause certain overlaps in the latent space and then sort of doing this distillation. Yeah. Like it pushes it towards having certain other tendencies.

Vibhu Sapra [00:13:24]: Got it.
I think there's like a bunch of these open-ended questions, right? Like you can't train in new stuff during the RL phase, right? RL only reorganizes weights and you can only do stuff that's somewhat there in your base model. You're not learning new stuff. You're just reordering chains and stuff. But okay. My broader question is when you guys work at an interp lab, how do you decide what to work on and what's kind of the thought process? Right. Because we can ramble for hours. Okay. I want to know this. I want to know that. But like, how do you concretely like, you know, what's the workflow? Okay. There's like approaches towards solving a problem, right? I can try prompting. I can look at chain of thought. I can train probes, SAEs. But how do you determine, you know, like, okay, is this going anywhere? Like, do we have set stuff? Just, you know, if you can help me with all that. Yeah.

Myra Deng [00:14:07]: It's a really good question. I feel like we've always at the very beginning of the company thought about like, let's go and try to learn what isn't working in machine learning today. Whether that's talking to customers or talking to researchers at other labs, trying to understand both where the frontier is going and where things are really not falling apart today. And then developing a perspective on how we can push the frontier using interpretability methods. And so, you know, even our chief scientist, Tom, spends a lot of time talking to customers and trying to understand what real world problems are and then taking that back and trying to apply the current state of the art to those problems and then seeing where they fall down basically. And then using those failures or those shortcomings to understand what hills to climb when it comes to interpretability research. So like on the fundamental side, for instance, when we have done some work applying SAEs and probes, we've encountered, you know, some shortcomings in SAEs that we found a little bit surprising.
And so have gone back to the drawing board and done work on that. And then, you know, we've done some work on better foundational interpreter models. And a lot of our team's research is focused on what is the next evolution beyond SAEs, for instance. And then when it comes to like control and design of models, you know, we tried steering with our first API and realized that it still fell short of black box techniques like prompting or fine tuning. And so went back to the drawing board and we're like, how do we make that not the case and how do we improve it beyond that? And one of our researchers, Ekdeep, who just joined is actually Ekdeep and Atticus are like steering experts and have spent a lot of time trying to figure out like, what is the research that enables us to actually do this in a much more powerful, robust way? So yeah, the answer is like, look at real world problems, try to translate that into a research agenda and then like hill climb on both of those at the same time.

Shawn Wang [00:16:04]: Yeah. Mark has the steering CLI demo queued up, which we're going to go into in a sec. But I always want to double click on when you drop hints, like we found some problems with SAEs. Okay. What are they? You know, and then we can go into the demo. Yeah.

Myra Deng [00:16:19]: I mean, I'm curious if you have more thoughts here as well, because you've done it in the healthcare domain. But I think like, for instance, when we do things like trying to detect behaviors within models that are harmful or like behaviors that a user might not want to have in their model. So hallucinations, for instance, harmful intent, PII, all of these things. We first tried using SAE probes for a lot of these tasks. So taking the feature activation space from SAEs and then training classifiers on top of that, and then seeing how well we can detect the properties that we might want to detect in model behavior.
And we've seen in many cases that probes just trained on raw activations seem to perform better than SAE probes, which is a bit surprising if you think that SAEs are actually also capturing the concepts that you would want to capture cleanly and more surgically. And so that is an interesting observation. I don't think that is like, I'm not down on SAEs at all. I think there are many, many things they're useful for, but we have definitely run into cases where I think the concept space described by SAEs is not as clean and accurate as we would expect it to be for actual like real world downstream performance metrics.

Mark Bissell [00:17:34]: Fair enough. Yeah. It's the blessing and the curse of unsupervised methods where you get to peek into the AI's mind. But sometimes you wish that you saw other things when you walked inside there. Although in the PII instance, I think an SAE-based approach actually did prove to be the most generalizable?

Myra Deng [00:17:53]: It did work well in the case that we published with Rakuten. And I think a lot of the reasons it worked well was because we had a noisier data set. And so actually the blessing of unsupervised learning is that we actually got to get more meaningful, generalizable signal from SAEs when the data was noisy. But in other cases where we've had like good data sets, it hasn't been the case.

Shawn Wang [00:18:14]: And just because you named Rakuten and I don't know if we'll get another chance, like what is the overall, like what is Rakuten's usage or production usage? Yeah.

Myra Deng [00:18:25]: So they are using us to essentially guardrail and inference time monitor their language model usage and their agent usage to detect things like PII so that they don't route private user information.

Myra Deng [00:18:41]: And so that's, you know, going through all of their user queries every day. And that's something that we deployed with them a few months ago.
And now we are actually exploring very early partnerships, not just with Rakuten, but with other people around how we can help with potentially training and customization use cases as well. Yeah.Shawn Wang [00:19:03]: And for those who don't know, like it's Rakuten is like, I think number one or number two e-commerce store in Japan. Yes. Yeah.Mark Bissell [00:19:10]: And I think that use case actually highlights a lot of like what it looks like to deploy things in practice that you don't always think about when you're doing sort of research tasks. So when you think about some of the stuff that came up there that's more complex than your idealized version of a problem, they were encountering things like synthetic to real transfer of methods. So they couldn't train probes, classifiers, things like that on actual customer data of PII. So what they had to do is use synthetic data sets. And then hope that that transfer is out of domain to real data sets. And so we can evaluate performance on the real data sets, but not train on customer PII. So that right off the bat is like a big challenge. You have multilingual requirements. So this needed to work for both English and Japanese text. Japanese text has all sorts of quirks, including tokenization behaviors that caused lots of bugs that caused us to be pulling our hair out. And then also a lot of tasks you'll see. You might make simplifying assumptions if you're sort of treating it as like the easiest version of the problem to just sort of get like general results where maybe you say you're classifying a sentence to say, does this contain PII? But the need that Rakuten had was token level classification so that you could precisely scrub out the PII. So as we learned more about the problem, you're sort of speaking about what that looks like in practice. Yeah. A lot of assumptions end up breaking. And that was just one instance where you. 
A problem that seems simple right off the bat ends up being more complex as you keep diving into it.
Vibhu Sapra [00:20:41]: Excellent. One of the things that's also interesting with interp is that a lot of these methods are very efficient, right? You're just looking at a model's internals, compared to a separate guardrail like LLM-as-a-judge, which is a separate model. One, you have to host it. Two, there's a whole latency cost: if you use a big model, you have a second call. Some of the work around self-detection of hallucination is also deployed for efficiency, right? So if you have someone like Rakuten doing it live in production, you know, that's just another thing people should consider.
Mark Bissell [00:21:12]: Yeah. And something like a probe is super lightweight. Yeah. It's no extra latency really. Excellent.
Shawn Wang [00:21:17]: You have the steering demos lined up, so we'll just kind of see what you've got. I don't actually know if this is like the latest, latest or like an alpha thing.
Mark Bissell [00:21:26]: No, this is a pretty hacky demo from a presentation that someone else on the team recently gave. So this will give a sense for the technology, so you can see the steering in action. Honestly, I think the biggest thing that this highlights is that as we've been growing as a company and taking on more and more ambitious versions of interpretability-related problems, a lot of that comes down to scaling up in various different forms. And so here you're going to see steering on a 1 trillion parameter model. This is Kimi K2. And so it's sort of fun that in addition to the research challenges, there are engineering challenges that we're now tackling. Because for any of this to be useful in production, you need to be thinking about what it looks like when you're using these methods on frontier models, as opposed to sort of toy model organisms.
So yeah, this was thrown together hastily and is pretty fragile behind the scenes, but I think it's quite a fun demo. So screen sharing is on. I've got two terminal sessions pulled up here. On the left is a forked version that we have of the Kimi CLI that we've got running to point at our custom hosted Kimi model. And then on the right is a setup that will allow us to steer on certain concepts. So I should be able to chat with Kimi over here. Tell it hello. This is running locally. So the CLI is running locally, but the Kimi server is running back at the office. Well, hopefully it should be. That's too much to run on that Mac. Yeah. I think it takes a full H100 node. I think you can run it on eight GPUs, eight H100s. So yeah, Kimi's running. We can ask it a prompt. It's got a forked version of the SGLang code base that we've been working on. So I'm going to tell it, hey, this SGLang code base is slow. I think there's a bug. Can you try to figure it out? It's a big code base, so it'll spend some time doing this. And then on the right here, I'm going to initialize some steering in real time. Let's see here.
Mark Bissell [00:23:33]: Searching for any bugs. Feature ID 43205.
Shawn Wang [00:23:38]: Yeah.
Mark Bissell [00:23:38]: 20, 30, 40. So this is basically a feature that we found inside Kimi that seems to cause it to speak in Gen Z slang. And so on the left, it's still sort of thinking normally. It might take, I don't know, 15 seconds for this to kick in, but then we're going to start hopefully seeing it say things like, this code base is massive, for real. So we're going to start seeing Kimi transition, as the steering kicks in, from normal Kimi to Gen Z Kimi, both in its chain of thought and its actual outputs.
Mark Bissell [00:24:19]: And interestingly, you can see, you know, it's still able to call tools and stuff. It's purely sort of its demeanor.
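Conceptually, steering on a feature like the one in this demo amounts to adding a scaled direction to the model's hidden activations at some layer during the forward pass. The sketch below shows only that arithmetic, assuming the feature direction is available as a vector (for example, one row of an SAE decoder). The `steer` and `projection` helpers and the tiny three-dimensional vectors are illustrative assumptions, not the demo's actual code.

```python
def steer(hidden, direction, strength):
    """Return hidden + strength * (direction / ||direction||)."""
    norm = sum(d * d for d in direction) ** 0.5
    if norm == 0:
        return list(hidden)  # a zero direction leaves activations unchanged
    return [h + strength * d / norm for h, d in zip(hidden, direction)]

def projection(hidden, direction):
    """Component of the hidden state along the (normalized) direction."""
    norm = sum(d * d for d in direction) ** 0.5
    return sum(h * d for h, d in zip(hidden, direction)) / norm

# Hypothetical values: a 3-dimensional "residual stream" and feature direction.
hidden = [1.0, 0.0, 0.0]
feature_direction = [0.0, 3.0, 4.0]
steered = steer(hidden, feature_direction, strength=5.0)
```

Raising `strength` step by step increases how strongly the hidden state points along the feature direction, while components orthogonal to that direction are left untouched.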
And there are other features that we found for interesting things like concision. So that's more of a practical one. You can make it more concise. Or the types of programming languages that it uses. But yeah, as we're seeing it come in: pretty good outputs.
Shawn Wang [00:24:43]: Scheduler code is actually wild.
Vibhu Sapra [00:24:46]: Yo, this code is actually insane, bro.
Vibhu Sapra [00:24:53]: What's the process of training an SAE on this, or, you know, how do you label features? I know you guys put out a pretty cool blog post about, um, this autonomous interp, something about how agents for interp are different from coding agents. I don't know, while this is spewing out: how do we find feature 43205? Yeah.
Mark Bissell [00:25:15]: So in this case, our platform that we've been building out for a long time now supports all the sort of classic out-of-the-box interp techniques that you might want to have, like SAE training, probing, things of that kind. I'd say the techniques for vanilla SAEs are pretty well established now, where you take the model that you're interpreting, run a whole bunch of data through it, gather activations, and then, yeah, it's a pretty straightforward pipeline to train an SAE. There are a lot of different varieties. There's TopK SAEs, BatchTopK SAEs, normal ReLU SAEs. And then once you have your sparse features, to your point, assigning labels to them to actually understand that this is a Gen Z feature, that's actually where a lot of the kind of magic happens. Yeah. And the most basic standard technique is to look at all of the input data set examples that cause this feature to fire most highly. And then you can usually pick out a pattern. So for this feature, if I've run a diverse enough data set through my model, feature 43205 probably tends to fire on all the tokens that sound like Gen Z slang.
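Two of the ideas mentioned here can be sketched in a few lines: the TopK sparsity rule (keep only the k largest activations) and the basic labeling recipe (look at the examples where a feature fires most strongly). This is a hedged illustration in plain Python, where the helper names, the token list, and the activation values are made up for the example and do not come from any real SAE.

```python
def topk_sparsify(pre_acts, k):
    """Keep the k largest pre-activations (clamped at zero), zero out the rest."""
    ranked = sorted(range(len(pre_acts)), key=lambda i: pre_acts[i], reverse=True)
    keep = set(ranked[:k])
    return [max(v, 0.0) if i in keep else 0.0 for i, v in enumerate(pre_acts)]

def top_activating_examples(tokens, feature_acts, n=3):
    """Return the n tokens on which one feature fires most strongly."""
    pairs = sorted(zip(feature_acts, tokens), key=lambda p: p[0], reverse=True)
    return [tok for act, tok in pairs[:n] if act > 0]

# Hypothetical activations of a single feature over a handful of tokens.
tokens = ["the", "bro", "slay", "function", "fr"]
feature_acts = [0.0, 3.1, 2.4, 0.0, 1.9]

sparse = topk_sparsify([0.1, 2.0, -1.0, 0.5], k=2)
top_tokens = top_activating_examples(tokens, feature_acts, n=3)
```

A person (or a labeling model) would then read `top_tokens` and propose a name for the feature, such as "Gen Z slang".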
You know, that's the, that's the time of year to be like, Oh, I'm in this, I'm in this Um, and, um, so, you know, you could have a human go through all 43,000 concepts andVibhu Sapra [00:26:34]: And I've got to ask the basic question, you know, can we get examples where it hallucinates, pass it through, see what feature activates for hallucinations? Can I just, you know, turn hallucination down?Myra Deng [00:26:51]: Oh, wow. You really predicted a project we're already working on right now, which is detecting hallucinations using interpretability techniques. And this is interesting because hallucinations is something that's very hard to detect. And it's like a kind of a hairy problem and something that black box methods really struggle with. Whereas like Gen Z, you could always train a simple classifier to detect that hallucinations is harder. But we've seen that models internally have some... Awareness of like uncertainty or some sort of like user pleasing behavior that leads to hallucinatory behavior. And so, yeah, we have a project that's trying to detect that accurately. And then also working on mitigating the hallucinatory behavior in the model itself as well.Shawn Wang [00:27:39]: Yeah, I would say most people are still at the level of like, oh, I would just turn temperature to zero and that turns off hallucination. And I'm like, well, that's a fundamental misunderstanding of how this works. Yeah.Mark Bissell [00:27:51]: Although, so part of what I like about that question is you, there are SAE based approaches that might like help you get at that. But oftentimes the beauty of SAEs and like we said, the curse is that they're unsupervised. 
So when you have a behavior that you deliberately would like to remove, and that's more of a supervised task, often it is better to use something like probes and specifically target the thing that you're interested in reducing, as opposed to sort of hoping that when you fragment the latent space, one of the vectors that pops out.
Vibhu Sapra [00:28:20]: And as much as we're training an autoencoder to be sparse, we're not certain that, you know, we will get something that just correlates to hallucination. You'll probably split that up into 20 other things and who knows what they'll be.
Mark Bissell [00:28:36]: Of course. Right. Yeah. So there are known sorts of problems with, like, feature splitting and feature absorption. And then there's the off-target effects, right? Ideally, you would want to be very precise, whereas if you reduce the hallucination feature, suddenly maybe your model can't write creatively anymore. And maybe you don't like that, but you still want to stop it from hallucinating facts and figures.
Shawn Wang [00:28:55]: Good. So Vibhu has a paper to recommend there that we'll put in the show notes. But yeah, I mean, I guess just because your demo is done, any other things that you want to highlight or any other interesting features you want to show?
Mark Bissell [00:29:07]: I don't think so. Yeah. Like I said, this is a pretty small snippet. I think the main point here that I think is exciting is that there's not a whole lot of interp being applied to models quite at this scale. You know, Anthropic certainly has some research and, yeah, other teams as well. But it's nice to see these techniques, you know, being put into practice. I think not that long ago, the idea of real-time steering of a trillion parameter model would have sounded.
Shawn Wang [00:29:33]: Yeah.
The fact that it's real time, like you started the thing and then you edited the steering vector.
Vibhu Sapra [00:29:38]: I think it's an interesting one, TBD what the actual production use case would be for that, like the real-time editing. That's the fun part of the demo, right? You can kind of see how this could be served behind an API, right? Like, yes, you only have so many knobs and you can just tweak it a bit more. And I don't know how it plays in. People haven't done that much with, like, how does this work with or without prompting? Right. How does this work with fine-tuning? Like, there's a whole hype of continual learning, right? So there's just so much to see. Like, is this another parameter? Is it a parameter we just kind of leave at a default and don't use? So I don't know. Maybe someone here wants to put out a guide on how to use this with prompting, when to do what?
Mark Bissell [00:30:18]: Oh, well, I have a paper recommendation I think you would love, from Ekdeep on our team, who is an amazing researcher. I just can't say enough amazing things about Ekdeep. He has a paper, along with some others from the team and elsewhere, that goes into essentially the equivalence of activation steering and in-context learning (he thinks of everything in a cognitive neuroscience, Bayesian framework), but basically how you can precisely show that prompting, in-context learning, and steering exhibit similar behaviors, and even get quantitative about the magnitude of steering you would need to do to induce a certain amount of behavior, similar to certain prompting, even for things like jailbreaks and stuff. It's a really cool paper. Are you saying steering is less powerful than prompting? More like you can almost write a formula that tells you how to convert between the two of them.
Myra Deng [00:31:20]: And so, like, formally equivalent actually, in the limit.
Right.
Mark Bissell [00:31:24]: So one case study of this is for jailbreaks. I don't know, have you seen the stuff where you can do, like, many-shot jailbreaking? You flood the context with examples of the behavior. Anthropic put out that paper.
Shawn Wang [00:31:38]: A lot of people were like, yeah, we've been doing this, guys.
Mark Bissell [00:31:40]: Yeah. What's in this in-context learning and activation steering equivalence paper is that you can predict the number of examples that you will need to put in there in order to jailbreak the model, by doing steering experiments and using this sort of equivalence mapping. That's cool. That's really cool. It's very neat. Yeah.
Shawn Wang [00:32:02]: I was going to say, like, you know, I can back-rationalize that this makes sense because, you know, what context is, is basically just, you know, it updates the KV cache, kind of, and then every next-token inference is still, you know, the sum of everything all the way up, plus all the context up to date. And you could, I guess, theoretically replace that with your steering. The only problem is steering typically is on one layer, maybe three layers, like you did. So it's not exactly equivalent.
Mark Bissell [00:32:33]: Right, right. You need to get precise about, yeah, how you define steering and how you're modeling the setup. But yeah, I've got the paper pulled up here. The title is "Belief Dynamics Reveal the Dual Nature of In-Context Learning and Activation Steering." So Eric Bigelow and Dan Wurgaft, who are doing fellowships at Goodfire, and Ekdeep's the final author there.
Myra Deng [00:32:59]: I think actually, to your question of, like, what is the production use case of steering?
I think maybe if you just think one level beyond steering as it is today: imagine if you could adapt your model to be, you know, an expert legal reasoner, in almost real time, very quickly and efficiently, using human feedback or using your semantic understanding of what the model knows and where it knows that behavior. I think that while it's not clear what the product is at the end of the day, it's clearly very valuable. Thinking about what's the next interface for model customization and adaptation is a really interesting problem for us. We have heard a lot of people actually interested in fine-tuning and RL for open-weight models in production. And so people are using things like Tinker or kind of open source libraries to do that, but it's still very difficult to get models fine-tuned and RL'd for exactly what you want them to do unless you're an expert at model training. And so that's something we're looking into.
Shawn Wang [00:34:06]: Yeah. I never thought so. Tinker from Thinking Machines famously uses rank-one LoRA. Is that basically the same as steering? Like, you know, what's the comparison there?
Mark Bissell [00:34:19]: Well, so in that case, you are still applying updates to the parameters, right?
Shawn Wang [00:34:25]: Yeah. You're not touching a base model. You're touching an adapter. It's kind of, yeah.
Mark Bissell [00:34:30]: Right. But I guess it still is more in parameter space then. I guess it's maybe like, are you modifying the pipes or are you modifying the water flowing through the pipes to get what you're after? Yeah. Just maybe one way.
Mark Bissell [00:34:44]: I like that analogy. That's my mental map of it at least, but it gets at this idea of model design and intentional design, which is something that we're very focused on.
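The "pipes versus water" distinction can be made concrete with a toy linear layer. A rank-one adapter changes the weights, so its effect depends on the input; steering leaves the weights alone and adds a fixed vector to the activations. The sketch below is only an illustration under those assumptions. The helper names and the 2x2 example are hypothetical, and real LoRA adapters and real steering setups involve more machinery than this.

```python
def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def rank_one_update(W, u, v):
    """Modify the pipes: return W + u v^T, a rank-one change to the weights."""
    return [[W[i][j] + u[i] * v[j] for j in range(len(v))] for i in range(len(u))]

def add_steering(h, s):
    """Modify the water: add a fixed steering vector to the activations."""
    return [hi + si for hi, si in zip(h, s)]

W = [[1.0, 0.0], [0.0, 1.0]]   # toy layer weights (identity)
u, v = [1.0, 0.0], [0.0, 1.0]  # hypothetical rank-one adapter factors
s = [1.0, 0.0]                 # hypothetical steering vector

W_adapted = rank_one_update(W, u, v)

x_a = [2.0, 3.0]
x_b = [2.0, 0.0]

adapted_a = matvec(W_adapted, x_a)         # shift scales with v . x_a = 3
adapted_b = matvec(W_adapted, x_b)         # v . x_b = 0, so no shift at all
steered_a = add_steering(matvec(W, x_a), s)  # always shifted by s
steered_b = add_steering(matvec(W, x_b), s)  # always shifted by s
```

In this toy setting the adapter shifts the output by `u` times the dot product of `v` and the input, while steering applies the same offset regardless of the input.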
And just the fact that like, I hope that we look back at how we're currently training models and post-training models and just think what a primitive way of doing that right now. Like there's no intentionalityShawn Wang [00:35:06]: really in... It's just data, right? The only thing in control is what data we feed in.Mark Bissell [00:35:11]: So, so Dan from Goodfire likes to use this analogy of, you know, he has a couple of young kids and he talks about like, what if I could only teach my kids how to be good people by giving them cookies or like, you know, giving them a slap on the wrist if they do something wrong, like not telling them why it was wrong or like what they should have done differently or something like that. Just figure it out. Right. Exactly. So that's RL. Yeah. Right. And, and, you know, it's sample inefficient. There's, you know, what do they say? It's like slurping feedback. It's like, slurping supervision. Right. And so you'd like to get to the point where you can have experts giving feedback to their models that are, uh, internalized and, and, you know, steering is an inference time way of sort of getting that idea. But ideally you're moving to a world whereVibhu Sapra [00:36:04]: it is much more intentional design in perpetuity for these models. Okay. This is one of the questions we asked Emmanuel from Anthropic on the podcast a few months ago. Basically the question, was you're at a research lab that does model training, foundation models, and you're on an interp team. How does it tie back? Right? Like, does this, do ideas come from the pre-training team? Do they go back? Um, you know, so for those interested, you can, you can watch that. There wasn't too much of a connect there, but it's still something, you know, it's something they want toMark Bissell [00:36:33]: push for down the line. It can be useful for all of the above. Like there are certainly post-hocVibhu Sapra [00:36:39]: use cases where it doesn't need to touch that. 
I think the other thing a lot of people forget is that this stuff isn't too computationally expensive, right? Like I would say, if you're interested in getting into research, mech interp is one of the most approachable fields, right? A lot of this, train an SAE, train a probe, this stuff, like the budget for this one, there's already a lot done. There's a lot of open source work. You guys have done some too. Um, you know,
Shawn Wang [00:37:04]: There's like notebooks from the Gemini team for Neel Nanda or like, this is how you do it. Just step through the notebook.
Vibhu Sapra [00:37:09]: Even if you're not even technical with any of this, you can still make progress there. You can look at different activations. But if you do want to get into training, you know, training this stuff, correct me if I'm wrong, is in the thousands of dollars. It's not that high scale. And then same with, you know, applying it, doing it for post-training, all this stuff is fairly cheap on the scale of, okay, I want to get into model training but I don't have compute for, you know, pre-training stuff. So it's a very nice field to get into. And also there's a lot of open questions, right? Some of them have to do with, okay, I want a product, I want to solve this. But there's also just a lot of open-ended stuff that people could work on that's interesting. Right. I don't know if you guys have any calls for, like, what are the open questions, what's open work that you're either open to collaboration on, or that you'd just like to see solved, or just, you know, for people listening that want to get into mech interp, because people always talk about it. What are the things they should check out to start? Of course, you know, join you guys as well. I'm sure you're hiring.
Myra Deng [00:38:09]: There's a paper, I think from, was it Lee, uh, Sharkey?
It's "Open Problems in Mechanistic Interpretability," which I recommend everyone who's interested in the field read. It's just a really comprehensive overview of what are the things that experts in the field think are the most important problems to be solved. I also think, to your point, it's been really, really inspiring to see a lot of young people getting interested in interpretability. Actually not just young people, also scientists who have been, you know, experts in physics for many years, and in biology or things like this, transitioning into interp, because the barrier to entry is, you know, in some ways low, and there's a lot of information out there and ways to get started. There's this anecdote of professors at universities saying that all of a sudden every incoming PhD student wants to study interpretability, which was not the case a few years ago. So it just goes to show how, I guess, exciting the field is, how fast it's moving, how quick it is to get started and things like that.
Mark Bissell [00:39:10]: And also just a very welcoming community. You know, there's an open source mech interp Slack channel. People are always posting questions, and folks in the space are always responsive if you ask things on various forums and stuff. But yeah, the open problems paper is a really good one.
Myra Deng [00:39:28]: For other people who want to get started, I think, you know, MATS is a great program. What's the acronym for? Machine Learning and Alignment Theory Scholars? It's like the...
Vibhu Sapra [00:39:40]: Normally summer internship style.
Myra Deng [00:39:42]: Yeah, but they've been doing it year round now. And actually a lot of our full-time staff have come through that program. And it's great for anyone who is transitioning into interpretability. There's a couple other fellows programs.
We do one as well as Anthropic. And so those are great places to get started if anyone is interested.Mark Bissell [00:40:03]: Also, I think been seen as a research field for a very long time. But I think engineering... I think engineers are sorely wanted for interpretability as well, especially at Goodfire, but elsewhere, as it does scale up.Shawn Wang [00:40:18]: I should mention that Lee actually works with you guys, right? And in the London office and I'm adding our first ever McInturk track at AI Europe because I see this industry applications now emerging. And I'm pretty excited to, you know, help push that along. Yeah, I was looking forward to that. It'll effectively be the first industry McInturk conference. Yeah. I'm so glad you added that. You know, it's still a little bit of a bet. It's not that widespread, but I can definitely see this is the time to really get into it. We want to be early on things.Mark Bissell [00:40:51]: For sure. And I think the field understands this, right? So at ICML, I think the title of the McInturk workshop this year was actionable interpretability. And there was a lot of discussion around bringing it to various domains. Everyone's adding pragmatic, actionable, whatever.Shawn Wang [00:41:10]: It's like, okay, well, we weren't actionable before, I guess. I don't know.Vibhu Sapra [00:41:13]: And I mean, like, just, you know, being in Europe, you see the Interp room. One, like old school conferences, like, I think they had a very tiny room till they got lucky and they got it doubled. But there's definitely a lot of interest, a lot of niche research. So you see a lot of research coming out of universities, students. We covered the paper last week. It's like two unknown authors, not many citations. But, you know, you can make a lot of meaningful work there. Yeah. Yeah. Yeah.Shawn Wang [00:41:39]: Yeah. I think people haven't really mentioned this yet. It's just Interp for code. I think it's like an abnormally important field. 
We haven't mentioned this yet. The conspiracy theory last two years ago was when the first SAE work came out of Anthropic was they would do like, oh, we just used SAEs to turn the bad code vector down and then turn up the good code. And I think like, isn't that the dream? Like, you know, like, but basically, I guess maybe, why is it funny? Like, it's... If it was realistic, it would not be funny. It would be like, no, actually, we should do this. But it's funny because we know there's like, we feel there's some limitations to what steering can do. And I think a lot of the public image of steering is like the Gen Z stuff. Like, oh, you can make it really love the Golden Gate Bridge, or you can make it speak like Gen Z. To like be a legal reasoner seems like a huge stretch. Yeah. And I don't know if that will get there this way. Yeah.Myra Deng [00:42:36]: I think, um, I will say we are announcing. Something very soon that I will not speak too much about. Um, but I think, yeah, this is like what we've run into again and again is like, we, we don't want to be in the world where steering is only useful for like stylistic things. That's definitely not, not what we're aiming for. But I think the types of interventions that you need to do to get to things like legal reasoning, um, are much more sophisticated and require breakthroughs in, in learning algorithms. And that's, um...Shawn Wang [00:43:07]: And is this an emergent property of scale as well?Myra Deng [00:43:10]: I think so. Yeah. I mean, I think scale definitely helps. I think scale allows you to learn a lot of information and, and reduce noise across, you know, large amounts of data. But I also think we think that there's ways to do things much more effectively, um, even, even at scale. So like actually learning exactly what you want from the data and not learning things that you do that you don't want exhibited in the data. 
So we're not like anti-scale, but we are also realizing that scale is not going to get us anywhere. It's not going to get us to the type of AI development that we want to be at in, in the future as these models get more powerful and get deployed in all these sorts of like mission critical contexts. Current life cycle of training and deploying and evaluations is, is to us like deeply broken and has opportunities to, to improve. So, um, more to come on that very, very soon.Mark Bissell [00:44:02]: And I think that that's a use basically, or maybe just like a proof point that these concepts do exist. Like if you can manipulate them in the precise best way, you can get the ideal combination of them that you desire. And steering is maybe the most coarse grained sort of peek at what that looks like. But I think it's evocative of what you could do if you had total surgical control over every concept, every parameter. Yeah, exactly.Myra Deng [00:44:30]: There were like bad code features. I've got it pulled up.Vibhu Sapra [00:44:33]: Yeah. Just coincidentally, as you guys are talking.Shawn Wang [00:44:35]: This is like, this is exactly.Vibhu Sapra [00:44:38]: There's like specifically a code error feature that activates and they show, you know, it's not, it's not typo detection. It's like, it's, it's typos in code. It's not typical typos. And, you know, you can, you can see it clearly activates where there's something wrong in code. And they have like malicious code, code error. They have a whole bunch of sub, you know, sub broken down little grain features. Yeah.Shawn Wang [00:45:02]: Yeah. So, so the, the rough intuition for me, the, why I talked about post-training was that, well, you just, you know, have a few different rollouts with all these things turned off and on and whatever. And then, you know, you can, that's, that's synthetic data you can kind of post-train on. 
Yeah.
Vibhu Sapra [00:45:13]: And I think we make it sound easier than it is just saying, you know, they do the real hard work.
Myra Deng [00:45:19]: I mean, you guys have the right idea. Exactly. Yeah. We replicated a lot of these features in our Llama models as well. I remember there was like.
Vibhu Sapra [00:45:26]: And I think a lot of this stuff is open, right? Like, yeah, you guys opened yours. DeepMind has opened a lot of SAEs on Gemma. Even Anthropic has opened a lot of this. There's a lot of resources that, you know, we can probably share for people that want to get involved.
Shawn Wang [00:45:41]: Yeah. And special shout out to Neuronpedia as well. Yes. Like, yeah, amazing piece of work to visualize those things.
Myra Deng [00:45:49]: Yeah, exactly.
Shawn Wang [00:45:50]: I guess I wanted to pivot a little bit onto the healthcare side, because I think that's a big use case for you guys. We haven't really talked about it yet. This is a bit of a crossover for me because we do have a separate science pod that we're starting up, for AI for science, just because it's such a huge investment category, and also I'm less qualified to do it, but we actually have bio PhDs to cover that, which is great. But I need to just kind of recap your work, maybe on the Evo 2 stuff, and then build forward.
Mark Bissell [00:46:17]: Yeah, for sure. And maybe to frame up the conversation, I think another kind of interesting lens on interpretability in general is that a lot of the techniques that were described are ways to solve the AI-human interface problem. And bidirectional communication is the goal there. So what we've been talking about with intentional design of models and, you know, steering, but also more advanced techniques, is having humans impart our desires and control into models and over models.
And the reverse is also very interesting, especially as you get to superhuman models, whether that's narrow superintelligence, like these scientific models that work on genomics data, medical imaging, things like that, or, down the line, you know, superintelligence of other forms as well. What knowledge can the AIs teach us, as sort of the other direction in that? And so some of our life science work to date has been getting at exactly that question. Well, some of it does look like debugging these various life sciences models, understanding if they're actually performing well on tasks, or if they're picking up on spurious correlations. For instance, with genomics models, you would like to know whether they are focusing on the biologically relevant things that you care about, or if they're using some simpler correlate, like the ancestry of the person being looked at. But then also in the instances where they are superhuman, and maybe they are understanding elements of the human genome that we don't have names for, or specific, you know, discoveries that they've made that we don't know about, that's a big goal. And so we're already seeing that, right? We are partnered with organizations like Mayo Clinic, a leading research health system in the United States, Arc Institute, as well as a startup called Prima Mente, which focuses on neurodegenerative disease. And in our partnership with them, we've used foundation models they've been training and applied our interpretability techniques to find novel biomarkers for Alzheimer's disease. So I think this is just the tip of the iceberg. But that's like a flavor of some of the things that we're working on.
Shawn Wang [00:48:36]: Yeah, I think that's really fantastic. Obviously, we did the Chan Zuckerberg pod last year as well. And like, there's a plethora of these models coming out, because there's so much potential and research.
And it's like, very interesting how it's basically the same as language models, but just with a different underlying data set. But it's the same exact techniques. Like, there's no change, basically.

Mark Bissell [00:48:59]: Yeah. Well, and even in other domains, right? Like, you know, robotics, I know a lot of the companies just use Gemma as the backbone, and then they make it into a VLA that takes these actions. It's transformers all the way down. So yeah.

Vibhu Sapra [00:49:15]: Like we have MedGemma now, right? Like this week, even, there was MedGemma 1.5. And they're training it on this stuff, like 3D scans, medical domain knowledge, and all that stuff, too. So there's a push from both sides. But I think one of the things about mech interp is, you're a little bit more cautious in some domains, right? So healthcare mainly being one, like guardrails, understanding, you know, we're more risk averse to something going wrong there. So even just from a basic understanding, if we're trusting these systems to make claims, we want to know why and what's going on.

Myra Deng [00:49:51]: Yeah, I think there's totally a kind of deployment bottleneck to actually using foundation models for real patient usage or things like that. Like, say you're using a model for rare disease prediction, you probably want some explanation as to why your model predicted a certain outcome, and an interpretable explanation at that. So that's definitely a use case. But I also think being able to extract scientific information that no human knows, to accelerate drug discovery and disease treatment and things like that, actually is a really, really big unlock for science, like scientific discovery. And you've seen a lot of startups say that they're going to accelerate scientific discovery. And I feel like we actually are doing that through our interp techniques.
And kind of like, almost by accident. Like, I think we got reached out to very, very early on from these healthcare institutions. And none of us had healthcare.

Shawn Wang [00:50:49]: How did they even hear of you? A podcast.

Myra Deng [00:50:51]: Oh, okay. Yeah, podcast.

Vibhu Sapra [00:50:53]: Okay, well, now's that time, you know.

Myra Deng [00:50:55]: Everyone can call us.

Shawn Wang [00:50:56]: Podcasts are the most important thing. Everyone should listen to podcasts.

Myra Deng [00:50:59]: Yeah, they reached out. They were like, you know, we have these really smart models that we've trained, and we want to know what they're doing. And we were really early at that time, like three months old, and it was a few of us. And we were like, oh, my God, we've never used these models. Let's figure it out. But it's also great proof that interp techniques scale pretty well across domains. We didn't really have to learn too much about.

Shawn Wang [00:51:21]: Interp is a machine learning technique, machine learning skills everywhere, right? Yeah. And it's obviously, it's just like a general insight. Yeah. Probably to finance too, I think, which would be fun for our history. I don't know if you have anything to say there.

Mark Bissell [00:51:34]: Yeah, well, just across the science. Like, we've also done work on material science. Yeah, it really runs the gamut.

Vibhu Sapra [00:51:40]: Yeah. Awesome. And, you know, for those that should reach out, like, you're obviously experts in this, but is there a call out for people that you're looking to partner with, design partners, people to use your stuff, outside of just, you know, the general developer that wants to plug and play steering stuff? Like on the research side more so, are there ideal design partners, customers, stuff like that?

Myra Deng [00:52:03]: Yeah, I can talk about maybe non-life sciences, and then I'm curious to hear from you on the life sciences side.
But we're looking for design partners across many domains. On language, anyone who's customizing language models or trying to push the frontier of code or reasoning models is really interesting to us. And then we're also interested in the frontier of modeling. There's a lot of models that work in, like, pixel space, as we call it. So if you're doing world models, video models, even robotics, where there's not a very clean natural language interface to interact with, we think that interp can really help, and we're looking for a few partners in that space.

Shawn Wang [00:52:43]: Just because you mentioned the keyword

No Representation, No Generalization: Health Equity in Research

In this episode, Jess and Sarah welcome Dr. Kate Wallis and Dr. Diana Montoya-Williams to explore the essential topic of health equity in scientific research. The scientists examine the critical importance of rigorous research design and the transformative role of community engagement in conducting meaningful health studies. They address common methodological mistakes that compromise research validity, particularly focusing on how race and ethnicity are contextualized in scientific studies. Throughout the conversation, there is an emphasis on the need for greater transparency in research practices and how community involvement strengthens both the quality and relevance of scientific work. Despite acknowledging significant challenges in achieving health equity, the episode concludes on a hopeful note by highlighting the power of community solidarity and engagement in advancing public health outcomes.

Watch the conversation on YouTube: https://youtu.be/p726HlABGRI

- (00:00) Intro & Public Health Update
- (04:22) What's A Health/Science News Item That Caught Your Attention?
- (06:43) A Collaborative Project About How Science Has Failed Certain Communities
- (12:04) Common Mistakes In Research Validity
- (16:24) Understanding Race & Ethnicity In Research
- (21:25) What Does True Community Engagement Look Like?
- (30:07) What's Giving You Hope In Public Health And Science Right Now?

Links:

- https://www.inquirer.com/health/expert-opinions/autism-treatments-myths-fda-cdc-changes-20251204.html
- https://publications.aap.org/pediatricsopenscience/article/2/1/1/205504/Consensus-Recommendations-for-Antiracist-Child?searchresult=1

Interested in advertising with us? Please reach out to advertising@airwavemedia.com, with “Unbiased Science” in the subject line.
PLEASE NOTE: The discussion and information provided in this podcast are for general educational, scientific, and informational purposes only and are not intended as, and should not be treated as, medical or other professional advice for any particular individual or individuals. Every person and medical issue is different, and diagnosis and treatment require consideration of specific facts often unique to the individual. As such, the information contained in this podcast should not be used as a substitute for consultation with and/or treatment by a doctor or other medical professional. If you are experiencing any medical issue or have any medical concern, you should consult with a doctor or other medical professional. Further, due to the inherent limitations of a podcast such as this as well as ongoing scientific developments, we do not guarantee the completeness or accuracy of the information or analysis provided in this podcast, although, of course, we always endeavor to provide comprehensive information and analysis. In no event may Unbiased Science or any of the participants in this podcast be held liable to the listener or anyone else for any decision allegedly made or action allegedly taken or not taken allegedly in reliance on the discussion or information in this podcast or for any damages allegedly resulting from such reliance. The information provided herein does not represent the views of our employers. Learn more about your ad choices. Visit megaphone.fm/adchoices

Ep 81: Ex-OpenAI Researcher On Why He Left, His Honest AGI Timeline, & The Limits of Scaling RL

This episode features Jerry Tworek, a key architect behind OpenAI's breakthrough reasoning models (o1, o3) and Codex, discussing the current state and future of AI. Jerry explores the real limits and promise of scaling pre-training and reinforcement learning, arguing that while these paradigms deliver predictable improvements, they're fundamentally constrained by data availability and struggle with generalization beyond their training objectives. He reveals his updated belief that continual learning (the ability for models to update themselves based on failure and work through problems autonomously) is necessary for AGI, as current models hit walls and become "hopeless" when stuck. Jerry discusses the convergence of major labs toward similar approaches driven by economic forces, the tension between exploration and exploitation in research, and why he left OpenAI to pursue new research directions. He offers candid insights on the competitive dynamics between labs, the focus required to win in specific domains like coding, what makes great AI researchers, and his surprisingly near-term predictions for robotics (2-3 years) while warning about the societal implications of widespread work automation that we're not adequately preparing for.

- (0:00) Intro
- (1:26) Scaling Paradigms in AI
- (3:36) Challenges in Reinforcement Learning
- (11:48) AGI Timelines
- (18:36) Converging Labs
- (25:05) Jerry's Departure from OpenAI
- (31:18) Pivotal Decisions in OpenAI's Journey
- (35:06) Balancing Research and Product Development
- (38:42) The Future of AI Coding
- (41:33) Specialization vs. Generalization in AI
- (48:47) Hiring and Building Research Teams
- (55:21) Quickfire

With your co-hosts:

- @jacobeffron - Partner at Redpoint, Former PM Flatiron Health
- @patrickachase - Partner at Redpoint, Former ML Engineer LinkedIn
- @ericabrescia - Former COO Github, Founder Bitnami (acq'd by VMWare)
- @jordan_segall - Partner at Redpoint

#148 The Ultimate Leash Training Manual | 5 Steps to a Well Behaved Dog

You've probably tried every trick in the book to stop your dog from pulling on the leash... Maybe you've switched collars three times, bought a "no-pull" harness, or even considered giving up on walks altogether... making you feel like you're the only owner who can't control their dog on a simple walk. However, that's not the case at all.

We've developed a proven 5-phase system that transforms even the worst leash pullers into well-behaved walking partners.

Today we're breaking down the exact progression from my new book "The Ultimate Leash Training Manual" that's helped thousands of dogs master loose leash walking.

Recommended Training Equipment:

Best Ways to Build Better Habits & Break Bad Ones | James Clear

James Clear is an expert on behavioral change and habits and the author of the bestselling book Atomic Habits. We discuss the best ways to build new healthy habits and end bad ones without relying on motivation or willpower. Rather than list off categories of tools or acronyms, James explains how anchoring the changes you want to make in your identity and physical environment allows you to make desired changes quickly and ones that stick. Whether your goal is better fitness and physical health, productivity or mental health, you'll learn actionable, zero-cost protocols to build powerful and meaningful habits.

Sponsors

- AG1: https://drinkag1.com/huberman
- Lingo: https://hellolingo.com/huberman
- Wealthfront*: https://wealthfront.com/huberman
- Joovv: https://joovv.com/huberman
- Eight Sleep: https://eightsleep.com/huberman
- Function: https://functionhealth.com/huberman

Timestamps

- 00:00:00 James Clear
- 00:02:57 Common Habits, Tool: Habit Success & Getting Started
- 00:06:16 Make Starting a Habit Easier, Tool: 4 Laws of Behavior Change
- 00:10:18 Sponsors: Lingo & Wealthfront
- 00:13:26 Writing Habits, Seasons & Flexibility; Adaptability, Tool: Bad Day Plan
- 00:18:42 Consistency, Flow vs Grind, Master Showing Up, Learning & Practice
- 00:24:54 Chunking, Getting Started at Gym
- 00:28:01 Flow Don't Fight, Dissatisfaction & Effort, Tool: Identity-Based Habits
- 00:34:10 Friction, Competition & Effort; Credentials
- 00:39:38 Make Effort Rewarding, Mindset, Tools: Previsualization, Emphasize Positives
- 00:45:59 Sponsors: AG1 & Joovv
- 00:48:56 Reflection & Learning, Tool: Self-Testing; Perfectionism, Tool: Curiosity
- 00:55:18 Striving vs Relaxation, Balance, Tool: Turn On/Off; Hiking, Nature Reset
- 01:04:20 Identity & Professional Pursuits; Choosing New Projects; Clinging to Identity
- 01:14:24 Sponsor: Eight Sleep
- 01:15:42 Criticism; Identity & Growth
- 01:21:47 Failure, Identity, Sports, Tool: Rebounding & Reaching; Public Failures
- 01:30:03 Daily Habits, Tools: Day in Quarters; Never Miss Twice; Meal Timing
- 01:38:22 Daily Habit Timing & Sequencing, Tool: Mindfully Choose Inputs
- 01:45:37 Creativity, Specialization vs Generalization; Books
- 01:51:31 Sponsor: Function
- 01:53:18 Habits & Context, Environmental Cues, Tools for Minimizing Phone Use
- 02:02:01 Bad Habits, Checking Phone, Tools for Breaking Bad Habits
- 02:08:21 Physical & Social Environment, New Habits, Tool: Join/Create Groups
- 02:18:40 Family, Habits; Kids & Parenting, Tools: Stimulus; Good Conditions
- 02:26:05 Impact of Habits, Habits as Solutions; Upcoming Projects
- 02:32:45 Zero-Cost Support, YouTube, Spotify & Apple Follow, Reviews & Feedback, Sponsors, Protocols Book, Social Media, Neural Network Newsletter

*This experience may not be representative of other Wealthfront clients, and there is no guarantee of future performance or success. Experiences will vary. The Cash Account, which is not a deposit account, is offered by Wealthfront Brokerage LLC, member FINRA/SIPC. Wealthfront Brokerage is not a bank. The base APY is 3.50% on cash deposits as of November 07, 2025, is representative, subject to change, and requires no minimum. If eligible for the overall boosted rate of 4.15% offered in connection with this promo, your boosted rate is also subject to change if the base rate decreases during the 3 month promo period. Funds in the Cash Account are swept to program banks, where it earns the variable APY. New Cash Account deposits are subject to a 2-4 day holding period before becoming available for transfer. Investment advisory services are provided by Wealthfront Advisers LLC, an SEC-registered investment adviser. Securities investments: not bank deposits, bank-guaranteed or FDIC-insured, and may lose value.

Learn more about your ad choices. Visit megaphone.fm/adchoices

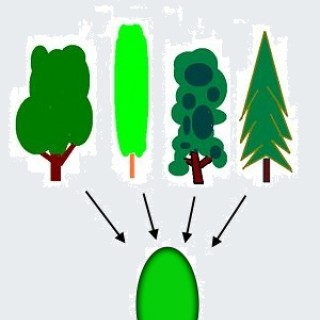

#147 - Old Skill, New Scenario: Using What You Already Know